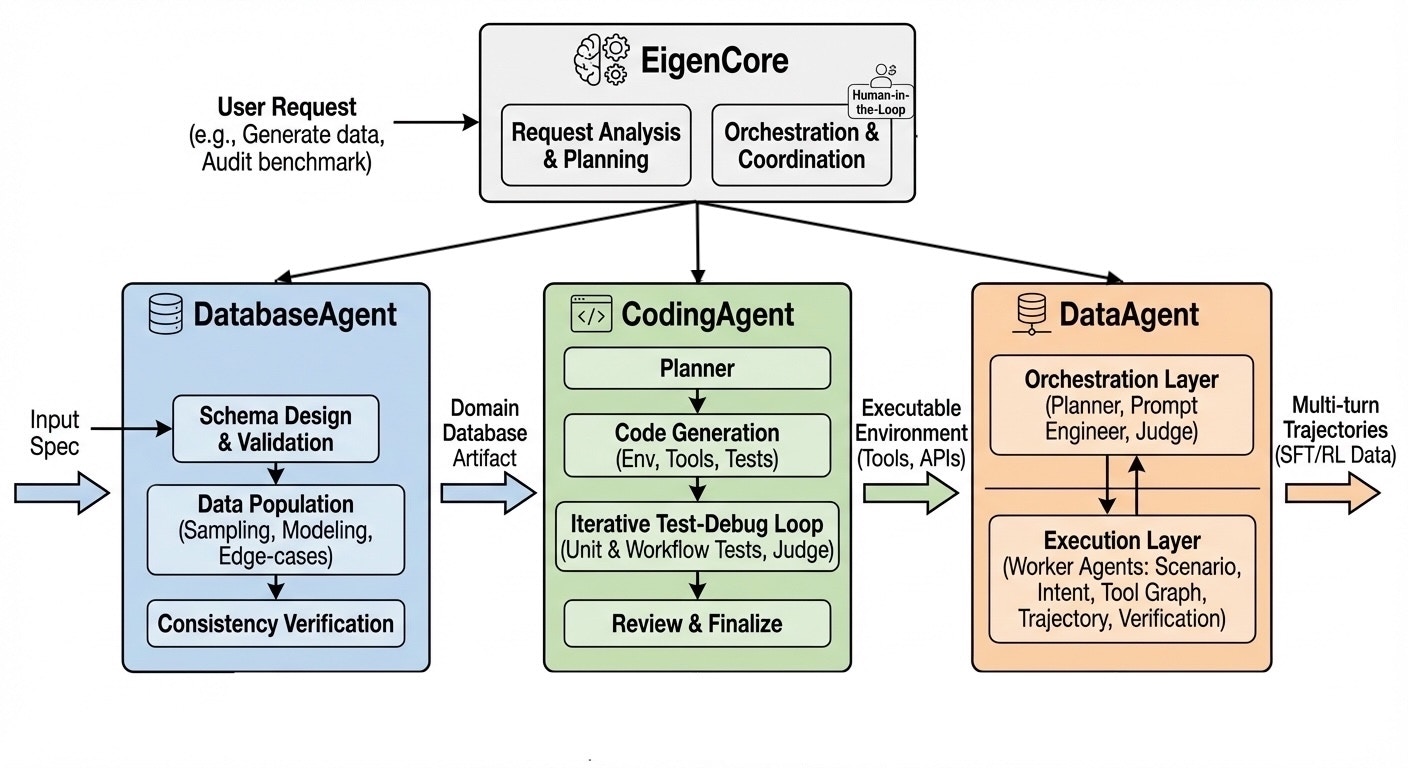

The EigenData Platform

EigenData is an integrated, self-evolving multi-agent platform that automates the full data lifecycle for function-calling agents, from constructing executable environments and backing databases to synthesizing, auditing, and repairing multi-turn trajectories. A top-level orchestrator, EigenCore, coordinates three specialized sub-systems:

- DatabaseAgent — Constructs realistic domain databases (relational tables, JSON stores, key-value structures) with constraint-aware sampling, distribution modeling, and edge-case injection.

- CodingAgent — Generates verified executable environments (Python modules, MCP servers, REST APIs) through an iterative multi-agent test-debug workflow with judge-based fault attribution.

- DataAgent — Synthesizes, evaluates, and iteratively refines high-quality multi-turn function-calling trajectories via a hierarchy of specialized worker agents.

EigenCore: Orchestration

EigenCore is the user-facing entry point. It accepts natural-language requests, ranging from narrow tasks (“repair the schemas in category X”) to broad directives (“generate a complete training environment and 1,000 trajectories for an airline-booking agent”), and decomposes each into a dependency-aware execution plan. A multi-turn conversational interface progressively elicits the required parameters, and cross-component feedback ensures that fixes in one layer propagate to all dependent artifacts.

DatabaseAgent: Domain Database Construction

The DatabaseAgent constructs realistic domain databases that underpin tool-use environments. Its pipeline proceeds through four stages:

- Input Specification — Accepts domain descriptions ranging from natural language (e.g., “an airline database with flights, passengers, and bookings”) to detailed schema definitions with constraints and example records. Underspecified inputs are augmented with LLM-inferred defaults and presented for user confirmation.

- Schema Design & Validation — Translates the specification into a formal schema (tables, types, keys, constraints), then validates it for structural soundness and API coverage.

- Data Population — Generates synthetic records using constraint-aware sampling, realistic distribution modeling, and deliberate edge-case injection (e.g., sold-out flights, canceled bookings) to ensure downstream training data covers failure modes.

- Consistency Verification — Runs referential-integrity checks and representative queries end to end. Violations trigger targeted regeneration of offending records rather than full repopulation.
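
To make the population and verification stages concrete, here is a minimal Python sketch over a hypothetical two-table airline schema (flights and bookings). The names `populate`, `find_violations`, and `repair`, the capacity range, and the 10% cancellation rate are illustrative assumptions, not the DatabaseAgent's actual implementation. Sampling only from flights with free seats is the constraint-aware step. One flight is deliberately filled to capacity as an injected edge case. Repair rewrites only the records that verification flags.

```python
import random
from dataclasses import dataclass


@dataclass
class Booking:
    booking_id: str
    flight_id: str
    status: str  # "confirmed" or "canceled"


def populate(num_flights: int, num_bookings: int, seed: int = 0):
    """Constraint-aware sampling with injected edge cases (sold-out flight, cancellations)."""
    rng = random.Random(seed)
    capacity = {f"FL{i:03d}": rng.randint(2, 4) for i in range(num_flights)}
    seats_used = {fid: 0 for fid in capacity}
    bookings = []

    # Edge-case injection: fill the first flight exactly to capacity (sold out).
    sold_out = next(iter(capacity))
    for _ in range(capacity[sold_out]):
        bookings.append(Booking(f"BK{len(bookings):04d}", sold_out, "confirmed"))
        seats_used[sold_out] += 1

    while len(bookings) < num_bookings:
        # Constraint: bookings may only target a flight that still has a free seat.
        open_flights = [f for f in capacity if seats_used[f] < capacity[f]]
        if not open_flights:
            break  # every flight is full; stop instead of violating capacity
        fid = rng.choice(open_flights)
        # Edge-case injection: roughly 10% of bookings are canceled (they occupy no seat).
        status = "canceled" if rng.random() < 0.1 else "confirmed"
        if status == "confirmed":
            seats_used[fid] += 1
        bookings.append(Booking(f"BK{len(bookings):04d}", fid, status))
    return capacity, bookings


def find_violations(capacity, bookings):
    """Consistency verification: indices of dangling or overbooking records."""
    bad, used = [], {fid: 0 for fid in capacity}
    for idx, b in enumerate(bookings):
        if b.flight_id not in capacity:
            bad.append(idx)  # referential-integrity violation
        elif b.status == "confirmed":
            used[b.flight_id] += 1
            if used[b.flight_id] > capacity[b.flight_id]:
                bad.append(idx)  # capacity violation (overbooked flight)
    return bad


def repair(capacity, bookings, bad, seed: int = 1):
    """Targeted regeneration: rewrite only the flagged records, keep everything else."""
    rng = random.Random(seed)
    bad_set = set(bad)
    used = {fid: 0 for fid in capacity}
    for idx, b in enumerate(bookings):
        if idx not in bad_set and b.status == "confirmed":
            used[b.flight_id] += 1
    for idx in bad:
        open_flights = [f for f in capacity if used[f] < capacity[f]]
        if open_flights:
            fid = rng.choice(open_flights)
            used[fid] += 1
            bookings[idx] = Booking(bookings[idx].booking_id, fid, "confirmed")
        else:
            # No free seat anywhere: keep the record as a canceled booking on a real flight.
            bookings[idx] = Booking(bookings[idx].booking_id, next(iter(capacity)), "canceled")
    return bookings


capacity, bookings = populate(num_flights=5, num_bookings=12)
bookings[3] = Booking(bookings[3].booking_id, "FL999", "confirmed")  # simulate a dangling foreign key
bad = find_violations(capacity, bookings)  # only the corrupted record is flagged
repair(capacity, bookings, bad)
```

Every record that was not flagged stays identical. That property is what makes targeted repair cheaper than full repopulation.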

CodingAgent: Environment & Tool Generation

The CodingAgent generates the executable software layer (tool implementations, simulation environments, and MCP servers) that exposes domain functionality as callable APIs. It coordinates several specialized agents through an iterative generate-test-debug workflow:

- Planner — Analyzes the domain specification and database schema to determine the set of functions to implement, their signatures, dependencies, and overall module structure.

- FileGenerationAgent — Generates complete Python modules from specifications. For environments, it produces stateful classes that load the domain database and expose tool functions. For MCP servers, it generates FastMCP-based servers with HTTP endpoints, session isolation, and auto-expiration.

- CodingAgent (inner) — The core code-generation agent that implements individual functions in multiple modes: generate (from scratch), correct (fix bugs), and refine (improve working code).

- TestingAgent — Automatically generates unit tests (happy paths, error cases, edge cases, type validation) and integration/workflow tests that verify related functions interact correctly.

- JudgeAgent — When tests fail, determines whether the fault lies in the generated code or in the generated tests — a critical distinction, since LLM-generated tests can themselves be incorrect.

- ReviewAgent — A quality gate that performs batch code review across all generated functions, checking for consistent patterns, proper state management, and adherence to specifications.
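
The following sketch shows what an environment produced by the FileGenerationAgent could look like: a stateful class that loads a domain database and exposes tool functions. The class name `AirlineEnv`, its three tools, and the convention of returning error dictionaries are hypothetical choices made for illustration. Deep-copying the database for each instance is one simple way to keep sessions isolated.

```python
import copy
from typing import Any, Dict, List


class AirlineEnv:
    """Hypothetical generated environment: loads a domain database, exposes tools."""

    def __init__(self, database: Dict[str, Any]):
        # Each instance (e.g. one session) mutates its own deep copy of the data.
        self.db = copy.deepcopy(database)
        self._next_booking = 1

    def search_flights(self, origin: str, destination: str) -> List[Dict[str, Any]]:
        """Read-only tool: list flights matching a route."""
        return [
            {"flight_id": fid, **info}
            for fid, info in self.db["flights"].items()
            if info["origin"] == origin and info["destination"] == destination
        ]

    def book_flight(self, flight_id: str, passenger: str) -> Dict[str, Any]:
        """State-mutating tool: reserve a seat, or return a structured error."""
        flight = self.db["flights"].get(flight_id)
        if flight is None:
            return {"error": f"unknown flight {flight_id}"}
        if flight["seats_left"] <= 0:
            return {"error": f"flight {flight_id} is sold out"}
        flight["seats_left"] -= 1
        booking_id = f"BK{self._next_booking:04d}"
        self._next_booking += 1
        self.db["bookings"][booking_id] = {
            "flight_id": flight_id, "passenger": passenger, "status": "confirmed"}
        return {"booking_id": booking_id}

    def cancel_booking(self, booking_id: str) -> Dict[str, Any]:
        """State-mutating tool: cancel a confirmed booking and release its seat."""
        booking = self.db["bookings"].get(booking_id)
        if booking is None or booking["status"] != "confirmed":
            return {"error": f"no active booking {booking_id}"}
        booking["status"] = "canceled"
        self.db["flights"][booking["flight_id"]]["seats_left"] += 1
        return {"status": "canceled"}


DB = {
    "flights": {
        "FL001": {"origin": "SFO", "destination": "JFK", "seats_left": 1},
        "FL002": {"origin": "SFO", "destination": "SEA", "seats_left": 0},
    },
    "bookings": {},
}
env = AirlineEnv(DB)
first = env.book_flight("FL001", "Ada")     # {"booking_id": "BK0001"}
second = env.book_flight("FL001", "Grace")  # {"error": "flight FL001 is sold out"}
```

Returning structured errors instead of raising exceptions keeps failure cases such as sold-out flights observable as ordinary tool outputs. That matters when the same environment later grounds trajectory generation.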

Two-Stage Testing Pipeline

The central mechanism is a two-stage testing pipeline with judge-based refinement:

- Stage 1: Unit Testing — For each function, the system generates unit tests, implements the function, runs the tests, and enters a judge-based debug loop if tests fail. An inner cycle iteratively revises the implementation; if it exhausts its attempts, the JudgeAgent attributes the fault, and either the code or the tests are regenerated accordingly.

- Stage 2: Workflow Testing — After all functions pass unit tests, the system groups functions by call dependencies and generates integration tests for each group, verifying that state mutations from one function are correctly observed by another.
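
The Stage 1 control flow can be sketched as follows. This is an outline built on several assumptions, not the CodingAgent's code. The agents are passed in as plain callables (`gen_tests`, `gen_code`, `revise_code`, `judge`), and the budgets `max_revisions` and `max_rounds` are hypothetical. In the toy usage, a simple property check (an absolute value is never negative) stands in for the LLM-based JudgeAgent. The first test batch contains a deliberately wrong expectation, so revising the already-correct code cannot help, and the judge blames the tests instead.

```python
from typing import Callable, List, Tuple

Case = Tuple[int, int]   # (input, expected output)
Impl = Callable[[int], int]


def run_tests(code: Impl, tests: List[Case]) -> List[Case]:
    """Return the failing cases; an empty list means every test passed."""
    return [(x, want) for x, want in tests if code(x) != want]


def unit_stage(gen_tests, gen_code, revise_code, judge,
               max_revisions: int = 3, max_rounds: int = 3):
    """Stage 1 sketch: inner revise loop, then judge-based fault attribution."""
    tests, code = gen_tests(), gen_code()
    for _ in range(max_rounds):
        failures = run_tests(code, tests)
        revisions = 0
        # Inner cycle: revise the implementation until tests pass or attempts run out.
        while failures and revisions < max_revisions:
            code = revise_code(code, failures)
            failures = run_tests(code, tests)
            revisions += 1
        if not failures:
            return code, tests, True
        # Attempts exhausted: the judge names the faulty artifact, which is regenerated.
        if judge(code, tests, failures) == "tests":
            tests = gen_tests()
        else:
            code = gen_code()
    return code, tests, False


# Toy stand-ins for LLM agents (hypothetical); the spec is "absolute value".
test_batches = iter([
    [(-2, -2), (3, 3)],          # first batch has a wrong expectation
    [(-2, 2), (3, 3), (0, 0)],   # regenerated batch is correct
])

def gen_tests():
    return next(test_batches)

def gen_code():
    return abs

def revise_code(code, failures):
    return code  # the code is already correct, so revision cannot help

def judge(code, tests, failures):
    # Property check standing in for the JudgeAgent: |x| is never negative.
    return "tests" if any(want < 0 for _, want in failures) else "code"

code, tests, passed = unit_stage(gen_tests, gen_code, revise_code, judge)
```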

Workflow Modes

The CodingAgent exposes several high-level workflow modes:

- Schema-Implement — Full implementation of a new environment from function schemas, through the complete test-debug pipeline.

- Code-Repair — Diagnosis and targeted repair of test failures or specification violations in existing implementations, with judge-based fault attribution to avoid chasing false positives.

- Code-Patch — Incremental updates when function schemas evolve (new parameters, changed types, updated contracts), patching only affected implementations and regenerating corresponding tests.

- Env-to-MCP — Converts an existing environment module into a standardized MCP server with FastMCP endpoints, session isolation, and transport configuration (stdio, HTTP, SSE).

- Format-Transform — Synthesizes deterministic Python conversion scripts to transform trajectory datasets between tool-calling formats (OpenAI Harmony, Anthropic Messages API, Qwen, DeepSeek, etc.), validated with structural conformance tests.
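
The sketch below illustrates the kind of deterministic script Format-Transform targets. It converts OpenAI Chat Completions-style tool-calling messages (not the Harmony format) into an Anthropic Messages-style payload, then runs a small structural conformance check. The function names are hypothetical. The check encodes two conservative assumptions: user and assistant roles alternate, and every `tool_use` block is answered by a matching `tool_result`.

```python
import json
from typing import Any, Dict, List


def openai_to_anthropic(messages: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Convert Chat Completions-style tool-calling messages to Anthropic Messages style."""
    system_parts: List[str] = []
    out: List[Dict[str, Any]] = []

    def append(role: str, blocks: List[Dict[str, Any]]) -> None:
        # Merge consecutive same-role turns so user/assistant roles alternate.
        if out and out[-1]["role"] == role:
            out[-1]["content"].extend(blocks)
        else:
            out.append({"role": role, "content": list(blocks)})

    for m in messages:
        role = m["role"]
        if role == "system":
            system_parts.append(m["content"])  # system prompt becomes a top-level field
        elif role == "user":
            append("user", [{"type": "text", "text": m["content"]}])
        elif role == "assistant":
            blocks = []
            if m.get("content"):
                blocks.append({"type": "text", "text": m["content"]})
            for call in m.get("tool_calls") or []:
                blocks.append({
                    "type": "tool_use",
                    "id": call["id"],
                    "name": call["function"]["name"],
                    # Chat Completions carries arguments as a JSON string; tool_use takes an object.
                    "input": json.loads(call["function"]["arguments"] or "{}"),
                })
            append("assistant", blocks)
        elif role == "tool":
            # Tool output goes back inside a user turn as a tool_result block.
            append("user", [{"type": "tool_result",
                             "tool_use_id": m["tool_call_id"],
                             "content": m["content"]}])
        else:
            raise ValueError(f"unsupported role: {role}")

    converted: Dict[str, Any] = {"messages": out}
    if system_parts:
        converted["system"] = "\n\n".join(system_parts)
    return converted


def check_conformance(converted: Dict[str, Any]) -> None:
    """Structural conformance test: alternating roles, every tool_use answered."""
    roles = [m["role"] for m in converted["messages"]]
    assert all(a != b for a, b in zip(roles, roles[1:])), "roles must alternate"
    pending = set()
    for m in converted["messages"]:
        for block in m["content"]:
            if block["type"] == "tool_use":
                pending.add(block["id"])
            elif block["type"] == "tool_result":
                assert block["tool_use_id"] in pending, "tool_result without tool_use"
                pending.discard(block["tool_use_id"])
    assert not pending, "unanswered tool_use"


sample = [
    {"role": "system", "content": "You are an airline assistant."},
    {"role": "user", "content": "Cancel booking BK0001."},
    {"role": "assistant", "content": None, "tool_calls": [{
        "id": "call_1", "type": "function",
        "function": {"name": "cancel_booking", "arguments": "{\"booking_id\": \"BK0001\"}"}}]},
    {"role": "tool", "tool_call_id": "call_1", "content": "{\"status\": \"canceled\"}"},
    {"role": "assistant", "content": "Booking BK0001 has been canceled."},
]
converted = openai_to_anthropic(sample)
check_conformance(converted)
```

Because the script is plain deterministic code rather than an LLM call, the same input always produces the same output. The conformance check can therefore run over a whole dataset as a regression test.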

DataAgent: Multi-Agent Trajectory Engine

The DataAgent is organized into two internal layers: an orchestration layer and an execution layer.

Orchestration Layer

The top-level orchestration layer plans workflows, writes prompts, and enforces quality control:

- Workflow Planner Agent — Analyzes the user’s request and domain specifications to design an optimal generation workflow: which worker agents to invoke, in what order, and with which models.

- Prompt Engineer Agent — Auto-generates and iteratively revises detailed instructions for each worker agent, incorporating domain knowledge, tool schemas, and feedback from prior iterations.

- Judge Agent — Critiques intermediate outputs along multiple dimensions — factual accuracy, tool correctness, trajectory coherence, formatting compliance, and realism — forming a self-optimizing feedback loop.
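
One way to picture the Judge Agent's feedback loop is sketched below. The judge scores a sample on the five dimensions listed above. A sample is accepted only if every dimension clears a threshold, and the failing dimensions (worst first) are handed to a prompt-revision step. The 0.8 threshold, the per-dimension minimum rule, and the stub agents in the usage are hypothetical stand-ins for LLM calls.

```python
from typing import Callable, Dict, List, Tuple

# The five critique dimensions listed for the Judge Agent.
DIMENSIONS = ("factual_accuracy", "tool_correctness", "trajectory_coherence",
              "formatting_compliance", "realism")


def verdict(scores: Dict[str, float], threshold: float = 0.8) -> Tuple[bool, List[str]]:
    """Accept only if every dimension clears the threshold; list failures worst first."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"critique is missing dimensions: {missing}")
    failing = sorted((d for d in DIMENSIONS if scores[d] < threshold), key=scores.__getitem__)
    return not failing, failing


def refine(prompt: str, generate: Callable, judge: Callable, revise: Callable,
           max_iters: int = 5, threshold: float = 0.8) -> Tuple[str, int]:
    """Generate, critique, and revise the prompt; stop once the judge accepts."""
    for iteration in range(max_iters):
        sample = generate(prompt)
        accepted, failing = verdict(judge(sample), threshold)
        if accepted:
            return prompt, iteration
        prompt = revise(prompt, failing)  # feedback targets the failing dimensions
    return prompt, max_iters


# Toy stand-ins for LLM-backed agents (hypothetical).
JSON_RULE = "Emit tool arguments as JSON."

def generate(prompt):
    return {"follows_json_rule": JSON_RULE in prompt}

def judge(sample):
    scores = {d: 0.9 for d in DIMENSIONS}
    if not sample["follows_json_rule"]:
        scores["formatting_compliance"] = 0.4
    return scores

def revise(prompt, failing):
    if "formatting_compliance" in failing:
        prompt += "\n" + JSON_RULE
    return prompt

final_prompt, iterations = refine("You are a booking assistant.", generate, judge, revise)
```

Requiring every dimension to pass, instead of averaging the scores, prevents a strong score on realism from masking a formatting failure. That choice is an assumption of this sketch.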

Execution Layer

The execution layer comprises specialized worker agents, each handling a distinct stage of the generation pipeline. The Workflow Planner dynamically selects and sequences these agents based on the task:

- RandomPoolAgent — Generates diverse scenario seeds (user personas, goals, constraints, contexts) to ensure broad coverage.

- UserIntentAgent — Synthesizes concrete user intents incorporating domain-specific details and task objectives.

- ToolGraphComposerAgent — Constructs a compositional tool-usage plan as a dependency graph over available APIs, with sanity checks for missing parameters and incompatible data flows.

- ToolGraphExecutorAgent — Executes the tool graph step-by-step, collecting intermediate results and recording structured traces that ground subsequent dialogue generation.

- UserSimulatorAgent — Simulates realistic user behavior and dialogue turns, maintaining persona consistency across multi-turn interactions.

- TrajectoryAgent — Orchestrates the assistant side of the conversation, incorporating function calls at appropriate turns and interacting with the UserSimulatorAgent to produce complete multi-turn dialogues.

- DialogConversionAgent — Converts raw trajectories into standardized formats (e.g., OpenAI Harmony, Anthropic Messages API) for model training or evaluation.

- VerificationAgent — Validates dialogue quality, tool-call correctness, goal completion, and formatting compliance, producing structured diagnostics.

- VerificationFunctionAgent — Synthesizes executable Python verification functions for each instance, encoding correctness criteria that serve as reward signals for downstream RL training.

- ModifyAgent — Applies targeted modifications guided by verification diagnostics — fixing formatting, rewriting inconsistent turns, or updating failed tool calls — enabling iterative refinement.

- DialogAnalyzerAgent — Segments conversations into safe zones, affected turns, and candidate zones when schema changes occur, enabling surgical trajectory repair.
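
The ToolGraphComposerAgent and ToolGraphExecutorAgent can be pictured with the following sketch. It assumes a hypothetical graph encoding: each node names a tool and its arguments, and an argument is either a literal or a `Ref` to a field of an upstream node's output. `check_graph` performs the sanity checks named above (unknown tools, missing parameters, dangling references, and type-incompatible data flows). `execute` runs the nodes in dependency order using the standard-library `graphlib.TopologicalSorter` (Python 3.9+) and records a structured trace.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    fn: Callable[..., Dict[str, Any]]
    params: Dict[str, type]   # parameter name -> expected type
    outputs: Dict[str, type]  # output field -> type


@dataclass(frozen=True)
class Ref:
    node: str   # upstream node id
    field: str  # field of that node's output


def check_graph(graph: Dict[str, dict], tools: Dict[str, Tool]) -> List[str]:
    """Sanity checks: unknown tools, missing parameters, dangling or ill-typed data flows."""
    problems = []
    for node, step in graph.items():
        tool = tools.get(step["tool"])
        if tool is None:
            problems.append(f"{node}: unknown tool {step['tool']}")
            continue
        for param, ptype in tool.params.items():
            if param not in step["args"]:
                problems.append(f"{node}: missing parameter {param}")
                continue
            value = step["args"][param]
            if isinstance(value, Ref):
                upstream = graph.get(value.node)
                if upstream is None or upstream["tool"] not in tools:
                    problems.append(f"{node}.{param}: dangling reference to {value.node}")
                elif tools[upstream["tool"]].outputs.get(value.field) is not ptype:
                    problems.append(f"{node}.{param}: incompatible data flow from {value.node}.{value.field}")
            elif not isinstance(value, ptype):
                problems.append(f"{node}.{param}: literal is not {ptype.__name__}")
    return problems


def execute(graph: Dict[str, dict], tools: Dict[str, Tool]) -> List[Dict[str, Any]]:
    """Run nodes in dependency order (assumes check_graph found no problems)."""
    deps = {node: {v.node for v in step["args"].values() if isinstance(v, Ref)}
            for node, step in graph.items()}
    results: Dict[str, Dict[str, Any]] = {}
    trace = []
    for node in TopologicalSorter(deps).static_order():
        step = graph[node]
        # Resolve references to upstream outputs; literals pass through unchanged.
        args = {k: results[v.node][v.field] if isinstance(v, Ref) else v
                for k, v in step["args"].items()}
        results[node] = tools[step["tool"]].fn(**args)
        trace.append({"node": node, "tool": step["tool"], "args": args, "output": results[node]})
    return trace


tools = {
    "search_flights": Tool(
        fn=lambda origin, destination: {"flight_id": "FL001"},
        params={"origin": str, "destination": str},
        outputs={"flight_id": str}),
    "book_flight": Tool(
        fn=lambda flight_id, passenger: {"booking_id": "BK0001"},
        params={"flight_id": str, "passenger": str},
        outputs={"booking_id": str}),
}
graph = {
    "book": {"tool": "book_flight",
             "args": {"flight_id": Ref("find", "flight_id"), "passenger": "Ada"}},
    "find": {"tool": "search_flights", "args": {"origin": "SFO", "destination": "JFK"}},
}
problems = check_graph(graph, tools)
trace = execute(graph, tools)  # "find" runs before "book" despite dict order
```

The resulting trace, with resolved arguments and outputs for each step, is the kind of structured record that can ground the dialogue the TrajectoryAgent later writes.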

Two-Phase Self-Evolving Strategy

EigenData uses a two-phase strategy for data synthesis at scale:

- Phase 1: Pilot Optimization — Generates small batches (e.g., 5–20 samples) and engages the Judge and orchestration agents in iterative refinement, incorporating both automated critiques and optional human feedback. Prompts and workflows are tuned until quality stabilizes.

- Phase 2: Large-Scale Generation with Online Monitoring — Uses the optimized configuration for full-scale production. Monitoring mechanisms (Judge + VerificationAgent) continuously evaluate quality; if drift is detected, the system pauses and re-enters Phase 1 locally.
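
Phase 2's drift check could be as simple as a rolling pass rate over recent verification outcomes. In this sketch, the window size, the minimum pass rate, and the callables `generate`, `verify`, and `pilot_optimize` are hypothetical; `pilot_optimize` stands in for re-entering Phase 1 locally. The toy usage makes version-1 outputs start failing after the tenth sample, which triggers one re-optimization.

```python
import itertools
from collections import deque


class DriftMonitor:
    """Rolling pass-rate monitor over the most recent verification outcomes."""

    def __init__(self, window: int = 50, min_pass_rate: float = 0.9):
        self.outcomes = deque(maxlen=window)
        self.min_pass_rate = min_pass_rate

    def record(self, passed: bool) -> bool:
        """Record one outcome; return True once a full window falls below the minimum."""
        self.outcomes.append(passed)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough evidence yet
        return sum(self.outcomes) / len(self.outcomes) < self.min_pass_rate

    def reset(self) -> None:
        self.outcomes.clear()


def produce(n, config, generate, verify, pilot_optimize, monitor, max_attempts=10_000):
    """Phase 2 sketch: keep verified samples; on drift, pause and re-optimize."""
    kept, reoptimizations, attempts = [], 0, 0
    while len(kept) < n and attempts < max_attempts:
        attempts += 1
        sample = generate(config)
        passed = verify(sample)
        if passed:
            kept.append(sample)
        if monitor.record(passed):
            config = pilot_optimize(config)  # re-enter Phase 1 locally
            monitor.reset()
            reoptimizations += 1
    return kept, config, reoptimizations


# Toy stand-ins (hypothetical): version-1 outputs degrade after the 10th sample.
counter = itertools.count()

def generate(config):
    return {"version": config["version"], "i": next(counter)}

def verify(sample):
    return sample["version"] >= 2 or sample["i"] < 10

def pilot_optimize(config):
    return {"version": config["version"] + 1}

kept, config, reopt = produce(15, {"version": 1}, generate, verify, pilot_optimize,
                              DriftMonitor(window=5, min_pass_rate=0.8))
```

Waiting for a full window before signaling drift avoids pausing production on one or two unlucky failures. The `max_attempts` guard stops the loop if re-optimization never recovers quality.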

From EigenData to EigenData-CLI

EigenData-CLI adds a chatbot layer on top of the EigenData platform. Instead of configuring agents directly, you interact with a conversational interface that translates natural-language requests into the orchestration commands that drive the underlying multi-agent system. This makes the full power of the EigenData framework accessible from your terminal, with no manual prompt engineering required.

Available Features

The CLI currently exposes the DataAgent sub-system’s core workflows, tailored to different stages of the data lifecycle:

- Schema Polish — Iteratively refines API/tool schemas via a verification–modification loop, correcting type mismatches, clarifying parameter descriptions, and resolving ambiguities.

- Data Generate — Synthesizes multi-turn function-calling trajectories from scratch using the two-phase self-evolving strategy, with support for both top-down (intent-driven) and bottom-up (tool-graph-grounded) generation.

- Schema-Triggered Patch — When function schemas evolve (renames, new parameters, type changes), surgically reconstructs only the affected trajectory portions — avoiding full dataset regeneration.

- Data Repair — Detects and resolves errors in existing trajectories through targeted segment repair, preserving valid conversation context while regenerating problematic turns.

- Data Audit — Systematically audits datasets against function schemas and business rules, producing structured reports with per-trajectory diagnostics, coverage analysis, and quality metrics.
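
For Schema-Triggered Patch, the segmentation performed by the DialogAnalyzerAgent might look like the sketch below for a simple function rename. The zone definitions are an interpretation, not a documented contract. Turns before the first affected call form the safe zone and are kept verbatim. Turns that call a changed function, together with their tool results, are affected. Later turns are candidates that may depend on the changed calls and need re-verification. The message format follows Chat Completions-style `tool_calls`, and `segment`, `apply_rename`, and `make_call` are hypothetical helpers.

```python
import copy
from typing import Any, Dict, List, Set


def segment(trajectory: List[Dict[str, Any]], changed: Set[str]) -> Dict[str, List[int]]:
    """Split turn indices into safe / affected / candidate zones for a schema change."""
    affected, affected_ids = [], set()
    for i, msg in enumerate(trajectory):
        hits = [c["id"] for c in msg.get("tool_calls") or []
                if c["function"]["name"] in changed]
        if hits:
            affected.append(i)
            affected_ids.update(hits)
        elif msg["role"] == "tool" and msg.get("tool_call_id") in affected_ids:
            affected.append(i)  # the result of an affected call
    if not affected:
        return {"safe": list(range(len(trajectory))), "affected": [], "candidate": []}
    first, affected_set = affected[0], set(affected)
    return {
        "safe": list(range(first)),  # untouched prefix, preserved verbatim
        "affected": affected,
        # Later turns may depend on the changed calls, so they are re-verified.
        "candidate": [i for i in range(first, len(trajectory)) if i not in affected_set],
    }


def apply_rename(trajectory, old: str, new: str, indices: List[int]):
    """Mechanical patch for a rename: edit only the affected turns, on a copy."""
    patched = copy.deepcopy(trajectory)
    for i in indices:
        for call in patched[i].get("tool_calls") or []:
            if call["function"]["name"] == old:
                call["function"]["name"] = new
    return patched


def make_call(call_id, name, args):
    return {"id": call_id, "type": "function", "function": {"name": name, "arguments": args}}

trajectory = [
    {"role": "user", "content": "Find my SFO-JFK flight and cancel booking BK0001."},
    {"role": "assistant", "content": None,
     "tool_calls": [make_call("c1", "search_flights", '{"origin": "SFO", "destination": "JFK"}')]},
    {"role": "tool", "tool_call_id": "c1", "content": '{"flight_id": "FL001"}'},
    {"role": "assistant", "content": None,
     "tool_calls": [make_call("c2", "cancel_booking", '{"booking_id": "BK0001"}')]},
    {"role": "tool", "tool_call_id": "c2", "content": '{"status": "canceled"}'},
    {"role": "assistant", "content": "Done: booking BK0001 is canceled."},
]
# Suppose the schema renames cancel_booking to cancel_reservation.
zones = segment(trajectory, {"cancel_booking"})
patched = apply_rename(trajectory, "cancel_booking", "cancel_reservation", zones["affected"])
```

A rename can be patched mechanically. A change such as a new required parameter would instead send the affected turns back for regeneration, while the safe zone is still reused unchanged.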

Planned Features

The following capabilities are under active development and will be available in future releases:

- Database Construction (DatabaseAgent) — Automated generation of realistic domain databases with schema design, constraint-aware data population, and consistency verification.

- Environment & Tool Generation (CodingAgent) — Verified executable environment generation through iterative test-debug workflows, including Python environment modules, MCP servers, and test suites.

- Code Repair & Code Patch (CodingAgent) — Diagnosis and targeted repair of implementation bugs, and incremental code updates when schemas evolve.

- Format Transform (CodingAgent) — Deterministic conversion of trajectory datasets between tool-calling formats (OpenAI Harmony, Anthropic Messages API, Qwen, DeepSeek, etc.) via synthesized conversion scripts.

To learn more about the research behind EigenData, see our papers: